4 out of 5 developers ranked us as the most cost-effective GenAI inference provider, with access to popular models and zero rate limits.

![A dark grid showcasing AI models categorized by functionality. Top row: "Text Generation" with "LLAMA 3.2 11B Instruct" by Meta, "LLAMA 3 70B Instruct" by Meta, and "Mixtral 8x22B Instruct" by Mistral AI. Bottom row: "Text Generation" with "AMD LLAMA 135M" by AMD, "Text-to-Image" with "Stable Diffusion 3 Medium" by Stability AI, and "Text-to-Image" with "Flux.1 [Schnell]" by Black Forest Labs.](https://cdn.prod.website-files.com/666078e26595dfe9b1e8171f/6737719462cb3cb2d0e304b7_severless-feature-1.avif)

No rate limits, no cold starts, and no waiting - just fast, reliable inference with automatic scaling built to handle any AI workload. We handle scaling, monitoring, and operations behind the scenes, so your team can focus on building.

Nscale Serverless Inference is a fully managed platform that enables AI model inference without requiring complex infrastructure management. It provides instant access to leading Generative AI models with a simple pay-per-use pricing model.

This service is designed for developers, startups, enterprises, and research teams who want to deploy AI-powered applications quickly and cost-effectively without handling infrastructure complexities.

Nscale follows a pay-per-request model:

- Text models: Billed based on input and output tokens.

- Image models: Pricing depends on output image resolution.

- Vision models: Charged based on processing requirements.

- New users receive free credits to explore the platform.
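To make the token-based billing for text models concrete, here is a minimal sketch of how a single request's cost could be estimated. The `TokenPricing` class, the function name, and the rates are hypothetical placeholders chosen for easy arithmetic; they are not Nscale's published prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical per-million-token rates in USD (not real Nscale prices)."""
    input_per_million: float
    output_per_million: float


def text_request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one text request billed on input and output tokens."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


# Placeholder rates, used only to illustrate the calculation.
example_pricing = TokenPricing(input_per_million=0.50, output_per_million=1.50)
example_cost = text_request_cost(example_pricing, input_tokens=2_000, output_tokens=500)
print(f"${example_cost:.6f}")  # (2000 * 0.50 + 500 * 1.50) / 1e6 = $0.001750
```

Image and vision models are priced on different inputs (output resolution and processing requirements), so they would need their own formulas with the provider's actual rates.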

No infrastructure hassles: We handle scaling, monitoring, and resource allocation.

Cost-effective: Our vertically integrated stack minimises compute costs.

Scalable & Reliable: Automatic scaling ensures optimal performance.

Secure & Private: No request or response data is logged or used for training.

OpenAI API & SDK compatibility: Easily integrate with existing tools.
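In practice, OpenAI API compatibility usually means a client can send the standard chat completions request to a different base URL. The sketch below builds such a request with the Python standard library only. The base URL, API key, and model id are hypothetical placeholders, and the `/chat/completions` path is assumed from the OpenAI wire format rather than taken from Nscale's documentation.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute the base URL, key, and model id
# from your own account and the provider's documentation.
BASE_URL = "https://inference.example.invalid/v1"
API_KEY = "YOUR_API_KEY"
MODEL_ID = "example-org/example-instruct-model"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Building the request needs no network access; sending it does.
request = build_chat_request(BASE_URL, API_KEY, MODEL_ID, "Say hello in one sentence.")
print(request.get_method(), request.full_url)
```

With the official OpenAI SDK, the equivalent step is typically to pass the compatible endpoint as the client's `base_url` and leave the rest of the calling code unchanged.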

Nscale automatically adjusts capacity based on real-time demand. No manual configuration is needed, so applications scale without operator intervention.

Cost per token is an important metric for evaluating AI inference TCO. It is the measure of what your infrastructure actually delivers. Input metrics like hourly GPU pricing or FLOPs per dollar tell you what you're spending or what's theoretically possible, but cost per token captures a broader picture: hardware performance, software optimization, and real-world utilization in a single number. Nscale's full-stack approach is designed to maximize token throughput across every deployment model, from multi-year private cloud to self-serve on-demand, giving you more useful output from your budget.
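The relationship between hourly GPU price, throughput, and utilization can be sketched as a short calculation. The formula below is one common way to approximate cost per token, not necessarily how Nscale computes it, and every figure in the example is a made-up placeholder rather than a benchmark.

```python
def cost_per_million_tokens(
    gpu_hour_price: float,
    peak_tokens_per_second: float,
    utilization: float,
) -> float:
    """Approximate USD cost per one million delivered tokens.

    gpu_hour_price: what one GPU-hour costs (an input metric).
    peak_tokens_per_second: throughput when the GPU is fully busy.
    utilization: fraction of each hour spent serving real traffic (0 to 1).
    """
    if gpu_hour_price < 0 or peak_tokens_per_second <= 0:
        raise ValueError("price must be non-negative and throughput positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    delivered_tokens_per_hour = peak_tokens_per_second * utilization * 3600
    return gpu_hour_price / delivered_tokens_per_hour * 1_000_000


# Placeholder scenarios: a cheaper GPU-hour versus a pricier but faster,
# better-utilized one.
cheaper_hour = cost_per_million_tokens(2.00, peak_tokens_per_second=1000, utilization=0.5)
faster_stack = cost_per_million_tokens(3.00, peak_tokens_per_second=2500, utilization=0.8)
print(f"cheaper GPU-hour: ${cheaper_hour:.3f} per 1M tokens")
print(f"faster stack:     ${faster_stack:.3f} per 1M tokens")
```

In this illustrative comparison the setup with the higher hourly price ends up with the lower cost per token, which is why hourly price alone can mislead.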

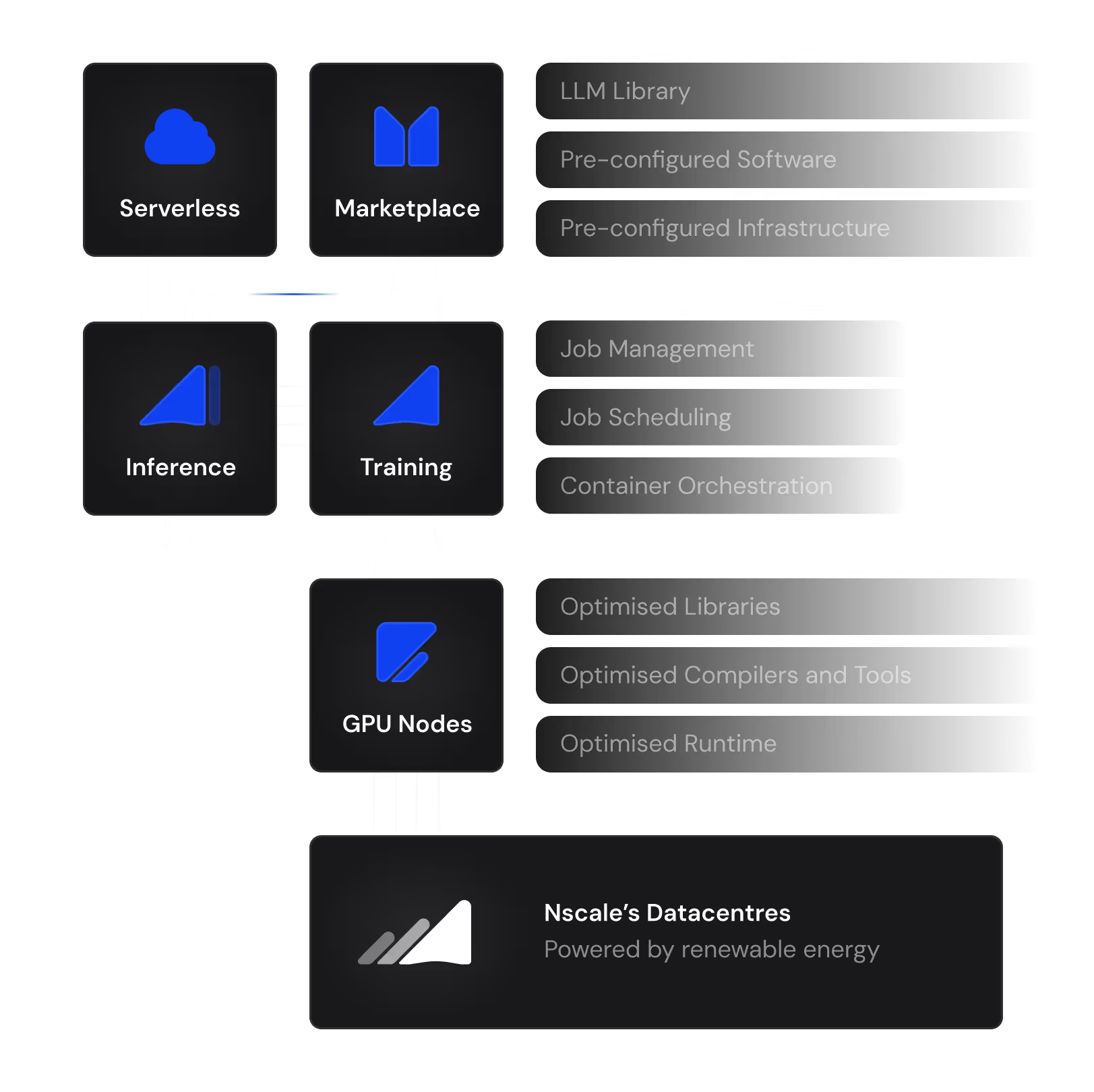

Nscale's vertically integrated, full-stack approach is engineered to maximize delivered token output. Built on the latest architectures, including the NVIDIA Blackwell and NVIDIA Blackwell Ultra platforms, Nscale combines infrastructure efficiency with software optimization to drive down cost per token at every layer. For consumption-based customers, this translates directly into better economics per token. For reserved deployments, it means more useful output from every GPU-hour under contract.
